This is an AI translated post.

The texture of sound matters.


Summarized by durumis AI

- Last Wednesday, the AI music generation service Udio launched publicly. Created by former Google DeepMind employees, Udio has attracted attention after receiving investment from musicians will.i.am and Common. Reviewers responded positively to the high quality of its AI-generated music.

- However, questions have also been raised about whether AI can properly reflect human creativity, and whether it can understand human emotions and cultural contexts.

- AI music generation services like Udio are increasing the accessibility of music production while raising new questions about the nature of human creativity and the connection to musical experience.

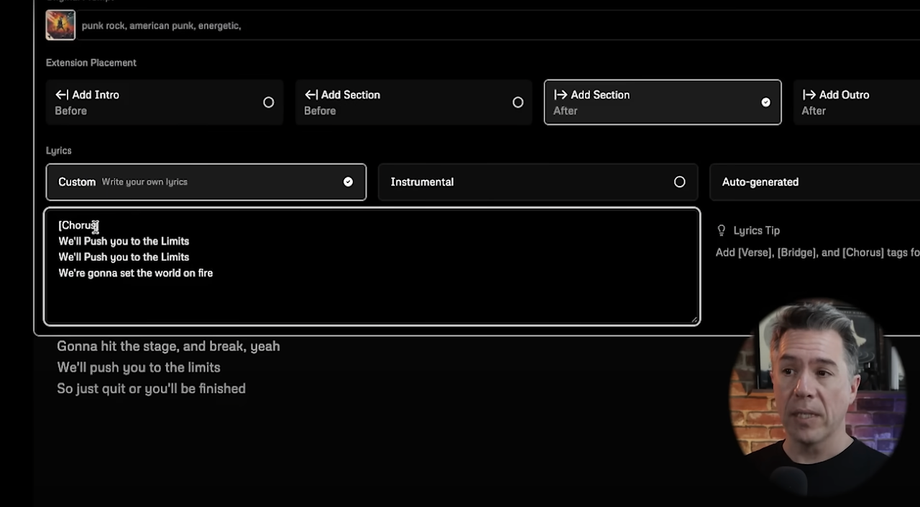

Last Wednesday, Udio, an AI music generation service that lets you create music from text prompts and even add elements such as vocals and lyrics, launched publicly. Following several months of private beta testing, the service, built by a team of former Google DeepMind employees, has attracted attention by securing $10 million in seed funding from prominent investors and celebrities, including musicians will.i.am and Common.

Interestingly, reviewers who tried Udio with the team's support have unanimously reported that its AI-generated music is of a very high standard, particularly in its live-performance-like presence and vocal harmonies. Many reports also suggest that, by simplifying music production, Udio opens up the possibility for anyone to become a composer, pointing to a revolutionary change in how music is created and consumed.

This development is another example of the AI-driven trend of democratizing creative expression by making tools for artistic creation accessible to a wider audience. However, alongside the discussion of the efficiency and user-friendly interfaces these tools offer, there are questions that need to be considered.

Can these tools reproduce the complex meaning and emotional depth that human creators bring to their works? This question is crucial for understanding both the potential and limitations of AI in the creative industries going forward.

In his YouTube video "How Textures Tell a Story," musician HAINBACH takes us to a tranquil park, a natural setting filled with grass and trees, and lets viewers hear how the uncontrollable electronic sounds of the Lyra-8 take on different meanings and stories depending on where he is standing. For him, the Lyra-8 is an instrument whose sound carries a unique narrative shaped by its sensory and cultural background.

The manufacturer, Soma, describes the Lyra-8 as an "organismic" synthesizer. Instead of following a traditional keyboard layout, it features a capacitive touch surface that responds to the user's physical characteristics, such as touch sensitivity, humidity, and temperature. This creates a more intimate and physically immersive environment, deepening the interaction and making the experience of creating sound highly personal and exploratory. It is an example of a rich, multi-dimensional sound experience that AI has yet to convincingly reproduce.

The world is already overflowing with pings, beeps, and music snippets. We spend most of our time in front of saturated screens, surrounded by device sounds that lack depth or contextual connection, so the news of yet another AI music generation service is both exciting and worrisome. I believe that AI technologies like Udio should not only mimic human musical abilities, but also aim to understand and reflect the complex emotional and cultural structures that underpin human creativity.

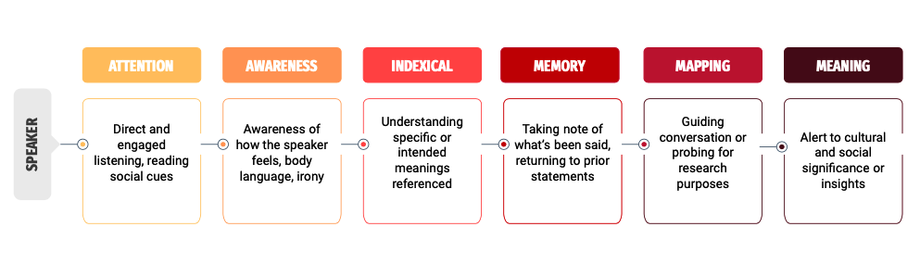

Michael Powell, a cultural anthropologist, emphasized in his paper "The Sound of Friction" that "listening" is a very effective technique for understanding human experience and cultural interaction. Here are some insights from his research that companies developing future AI-generated music services should consider.

First, an interactive feedback loop might be appropriate. Borrowing the iterative process of ethnographic interviews, a service could have the AI ask follow-up questions in response to the initial text input, or refine and adjust the generated music based on the user's first reactions.

Second, a service could try to provide personalized results by analyzing the subtle emotional tone and cultural texture embedded in the initial text input.

Third, just as ethnographers gain deeper insights as their research progresses, AI systems can be designed to deepen their understanding of user preferences and cultural nuances as the conversation history grows.
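To make these three suggestions a little more concrete, here is a minimal sketch in Python of how such a loop might be structured. Everything in it is hypothetical: the `Session` class, its methods, and the follow-up questions are invented for illustration and do not reflect how Udio or any other real service works. It only shows the shape of the idea: ask a follow-up question, record the answer, and fold the growing conversation history back into the prompt.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical follow-up questions, in the spirit of an ethnographic interview.
FOLLOW_UPS = [
    "What mood should the piece carry?",
    "Is there a place or occasion you associate with it?",
]


@dataclass
class Session:
    """Illustrative conversation state; not part of any real music service API."""

    prompt: str                                        # the user's initial text input
    history: List[str] = field(default_factory=list)   # answers collected so far

    def follow_up_question(self) -> Optional[str]:
        """Return the next unanswered question, or None when all are answered."""
        answered = len(self.history)
        return FOLLOW_UPS[answered] if answered < len(FOLLOW_UPS) else None

    def record(self, answer: str) -> None:
        """Store the user's answer so that later steps can draw on it."""
        self.history.append(answer)

    def refined_prompt(self) -> str:
        """Combine the initial prompt with everything learned so far."""
        return "; ".join([self.prompt, *self.history])
```

In this sketch, a session that starts with "a quiet song about rain" and then records the answers "wistful" and "a park at dusk" would produce a refined prompt containing all three pieces of context, which a generation step (not shown, and left as an assumption) could then use.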

AI services like Udio represent a significant leap forward in making music creation more accessible. But they also compel us to reflect on how to bridge the gap between the essence of creativity and the subtle human experiences that music can connect us to. This dialogue between technology and tradition, innovation and depth, will define the future trajectory of music in the digital age. Considering not only how sound is created, but also how it is perceived and valued in our society, might therefore be the best way to slow the inevitable arrival of a time when "human creations" become a high-priced label.
